What sort of CPU is best for music and audio production? Will your DAW benefit from more cores or a higher clock speed? Read on to find out. . .

The CPU, or central processing unit, is at the heart of every computer. It's the bit of your computer that does most of the actual, well, computing. Choosing the right CPU for the kind of work you do is therefore really important. But music and audio production puts some very particular demands on a computer, and not every CPU is right for the job.

So in this article I'll explain how a CPU works (in brief, don't worry!) and then explore what to look out for when buying one for music and audio work specifically.

CPUs: the Basics

The CPU's job in any computer is to crunch numbers, perform logic operations, control disk I/O (input and output) and tell the other hardware in your system what to do. Software and hardware devices are constantly sending instructions to the CPU. It's the CPU's job to prioritise and execute those instructions in the most efficient way possible. Retrieving those instructions from memory, interpreting them, and storing or passing on their results is what's called a fetch-decode-execute cycle or instruction cycle.
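To make the instruction cycle concrete, here is a minimal Python sketch of a toy processor. The three opcodes (`LOAD`, `ADD`, `MUL`), the single accumulator register and the `run` function are all invented for illustration; a real CPU decodes binary machine code and overlaps many of these steps in hardware.

```python
def run(program):
    """Run a list of (opcode, operand) pairs on a hypothetical toy CPU."""
    acc = 0  # accumulator register holding the running result
    pc = 0   # program counter: position of the next instruction in "memory"
    while pc < len(program):
        instruction = program[pc]      # fetch: read the next instruction
        pc += 1
        opcode, operand = instruction  # decode: split into operation and operand
        if opcode == "LOAD":           # execute: carry out the operation
            acc = operand
        elif opcode == "ADD":
            acc += operand
        elif opcode == "MUL":
            acc *= operand
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return acc  # pass on the result

print(run([("LOAD", 2), ("ADD", 3), ("MUL", 4)]))  # → 20
```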

A modern CPU can carry out these instruction cycles concurrently on multiple cores. Each core can crunch numbers and perform logic operations independently of the others, but the cores usually share a single RAM controller, part of the cache memory (typically the largest, last-level cache), and a disk I/O controller. On an actual physical level, the CPU executes instructions by opening and closing hundreds of millions of transistor gates contained within each core. A clock signal, derived from a crystal oscillator, governs the speed at which these are opened or closed.

An advertised base clock speed of 4.8GHz means that the opened or closed state of those gates can be changed 4.8 billion times a second — per core. These clock speeds are usually increased or decreased dynamically, and when a little more juice is called for on one or two cores, extra power can be diverted from the other cores. This is usually called a turbo boost.
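As a quick worked example, the Python below turns a clock speed into the length of one clock cycle, and into the number of cycles a single core gets for each audio sample. The 48 kHz sample rate is an assumption chosen for illustration, and the cycle count ignores IPC, boosting and everything else the CPU has to do.

```python
def cycle_time_ns(clock_ghz):
    """Duration of one clock cycle in nanoseconds."""
    return 1 / clock_ghz  # 1 GHz means one cycle per nanosecond

def cycles_per_sample(clock_ghz, sample_rate_hz):
    """Clock cycles one core completes during a single audio sample."""
    return clock_ghz * 1e9 / sample_rate_hz

print(f"{cycle_time_ns(4.8):.3f} ns")  # → 0.208 ns
print(cycles_per_sample(4.8, 48_000))  # → 100000.0
```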

So it stands to reason that the higher a CPU's clock speed and the more cores it has, the faster it'll be — right?

CPU Speed is. . . Complicated

Not quite. There isn't a one-to-one correspondence between fetch-decode-execute cycles and clock cycles. In fact, most modern CPUs can handle several instructions per clock cycle. The number of instructions per clock (IPC) is highly dependent on the microarchitecture of the CPU. But these microarchitectures vary so much from chip to chip that it's difficult to draw any real conclusions about two processors' relative speeds just by looking at their headline numbers.
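A rough way to see why headline clock speeds can mislead is to multiply clock speed by IPC. The two processors below are hypothetical, and a single IPC figure is itself a simplification, since real IPC varies from one workload to the next.

```python
def instructions_per_second(clock_ghz, ipc):
    """Approximate single-core throughput: clock rate x instructions per clock."""
    return clock_ghz * 1e9 * ipc

# Hypothetical chips, not real products.
cpu_a = instructions_per_second(clock_ghz=5.0, ipc=4)  # higher clock, lower IPC
cpu_b = instructions_per_second(clock_ghz=4.5, ipc=5)  # lower clock, higher IPC
print(cpu_a / 1e9, cpu_b / 1e9)  # billions of instructions per second → 20.0 22.5
```

Despite its lower clock speed, the second chip gets more work done per second in this example.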

Another important factor is how long a CPU core can be boosted for. This is usually related to temperature. The harder a core is pushed, the hotter it gets. If the temperature gets too high, the CPU will begin to throttle its clock speed. This is why thermal design power (TDP) is a useful metric to look at. TDP, expressed in watts, is a rough guide to how much heat the CPU's cooling system needs to be able to dissipate. The lower this number, the cooler the CPU can run. And the cooler it can run, the longer it is likely to be able to maintain its boosts.
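The relationship between heat and boost time can be sketched with a deliberately crude thermal model. Every number in it (temperatures, heating and cooling rates, clock speeds) is a made-up assumption, and real CPUs manage boost with far more sophisticated power and thermal controls.

```python
def simulate_boost(seconds, base_ghz=4.0, boost_ghz=5.0, limit_c=95.0,
                   start_c=40.0, heat_per_s=8.0, cooling_per_s=5.0):
    """Return the clock speed (GHz) chosen in each simulated second."""
    temp = start_c
    clocks = []
    for _ in range(seconds):
        boosting = temp < limit_c  # boost only while below the thermal limit
        clocks.append(boost_ghz if boosting else base_ghz)
        # Boosting produces more heat than the cooler removes; base clock produces less.
        heat = heat_per_s if boosting else heat_per_s / 2
        temp += heat - cooling_per_s
    return clocks

print(simulate_boost(30).index(4.0))                  # first throttled second → 19
print(simulate_boost(60, heat_per_s=6.0).index(4.0))  # less heat, longer boost → 55
```

Lowering `heat_per_s`, roughly what a lower-TDP chip would give you, keeps the boost going for much longer in this model.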

Things have been further complicated in recent years by Intel moving to a so-called "hybrid core" approach, where different cores have different capabilities. With this approach, cores are split into "Performance" and "Efficiency" cores, the latter drawing less power and thereby allowing the other cores to boost even higher. This approach can lead to some great results, but it can also cause significant problems for realtime audio work, with power-hungry plugins getting stuck on low-power Efficiency cores and causing playback to grind to a halt. This is the key reason we have decided to build our systems around AMD processors, which have so far not adopted this approach.
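The problem with a power-hungry plugin landing on an Efficiency core can be illustrated with a simple deadline check. The 128-sample buffer, the 48 kHz sample rate and the assumption that an Efficiency core runs this plugin chain at 60% of a Performance core's speed are all hypothetical; in practice the operating system's scheduler decides which core gets which thread.

```python
BUFFER_SAMPLES = 128   # assumed buffer size
SAMPLE_RATE = 48_000   # assumed sample rate in Hz
deadline_ms = BUFFER_SAMPLES / SAMPLE_RATE * 1000  # ≈ 2.67 ms to finish each buffer

def meets_deadline(chain_ms_on_p_core, relative_speed):
    """Does a plugin chain finish within one buffer period on this core type?"""
    time_ms = chain_ms_on_p_core / relative_speed
    return time_ms <= deadline_ms

print(meets_deadline(2.0, relative_speed=1.0))  # Performance core → True
print(meets_deadline(2.0, relative_speed=0.6))  # Efficiency core → False
```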

RAM can also be very important for CPU performance. Instructions, and the data they work on, are held in RAM before being loaded (via the CPU's caches) into a core's internal registers. The speed at which they can be transferred to the CPU may determine how long your CPU has to wait around for stuff to do.
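One standard way to reason about those waits is the average memory access time (AMAT) formula: hit time plus miss rate multiplied by miss penalty. The sketch below assumes a 4-cycle cache hit, a 5% miss rate and RAM penalties of 300 versus 200 cycles; these are illustrative figures, not measurements of any real system.

```python
def average_access_cycles(hit_cycles, miss_rate, miss_penalty_cycles):
    """AMAT = hit time + miss rate x miss penalty (all in clock cycles)."""
    return hit_cycles + miss_rate * miss_penalty_cycles

# Illustrative numbers only: same cache, slower versus faster trip out to RAM.
slower_ram = average_access_cycles(4, 0.05, miss_penalty_cycles=300)
faster_ram = average_access_cycles(4, 0.05, miss_penalty_cycles=200)
print(f"{slower_ram:.1f} vs {faster_ram:.1f} cycles")  # → 19.0 vs 14.0 cycles
```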

Why a CPU's Single Core Speed Matters for Audio Production

There are some monster multicore CPUs out there these days. Some AMD Threadripper chips, for instance, have 96 cores: 80 more than are found in even our most powerful system! But multicore CPUs are designed to handle a lot of parallel processes all at once, and that's not the main thing we're looking for when dealing with audio.

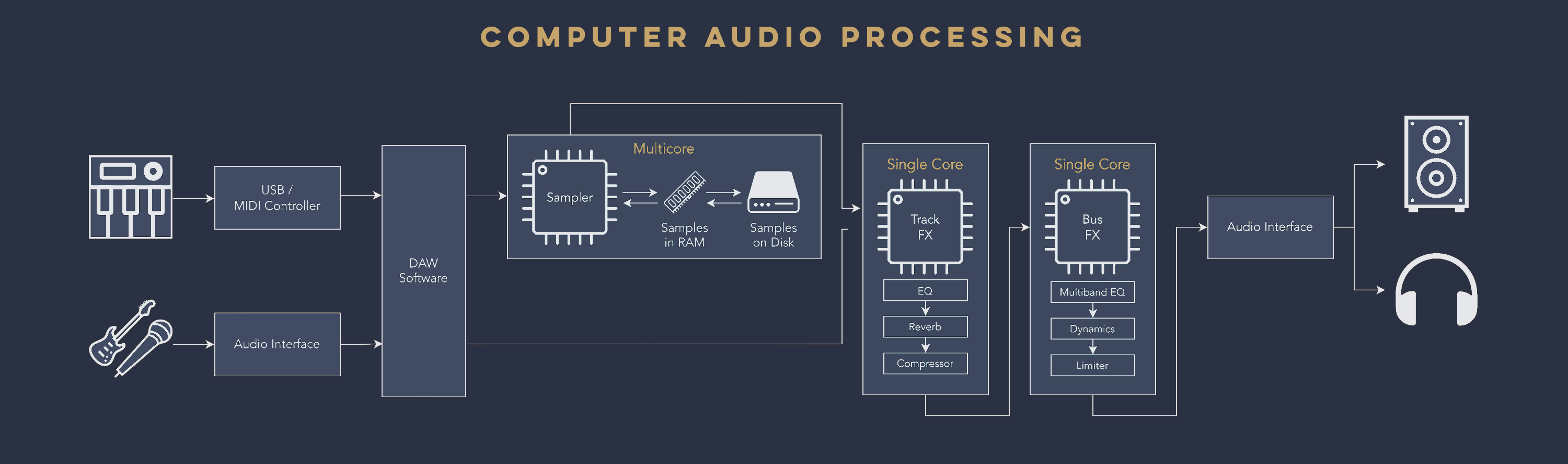

Your DAW can deal with inputs from multiple MIDI and audio tracks in parallel. Your sampler — Kontakt for instance — can also fetch and decompress multiple samples from RAM or your storage disk simultaneously. None of these processes needs to wait for other processes to finish before they can begin, so their load can easily be spread between multiple cores.
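Because these jobs are independent, they can be handed to separate workers at the same time. The Python sketch below uses `concurrent.futures.ThreadPoolExecutor` with a hypothetical `render_track` function that simply applies a gain. Note that in CPython the global interpreter lock prevents pure-Python number crunching like this from truly running on several cores at once, so treat it as a picture of the structure rather than a performance demonstration.

```python
from concurrent.futures import ThreadPoolExecutor

def render_track(samples, gain):
    """Process one track's buffer; needs nothing from any other track."""
    return [s * gain for s in samples]

tracks = {  # hypothetical track buffers and gains
    "drums": ([0.5, -0.5, 0.25], 0.8),
    "bass": ([0.1, 0.2, 0.3], 1.0),
    "keys": ([0.4, 0.0, -0.4], 0.5),
}

# No track waits on another, so all of them can be submitted at once.
with ThreadPoolExecutor(max_workers=3) as pool:
    futures = {name: pool.submit(render_track, s, g) for name, (s, g) in tracks.items()}
    rendered = {name: f.result() for name, f in futures.items()}

print(rendered["keys"])  # → [0.2, 0.0, -0.2]
```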

That's not the case for effects plugins. There's a reason we use the term effects chain: every effect on a track is linked to the next effect in series. Let's say that you have three insert effects on a particular track: an EQ, a reverb and a compressor. These have to be processed in sequence, one after the other, because the output of the EQ will be the input of the reverb, and the output of the reverb will be the input of the compressor.
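Here's a minimal sketch of a serial effects chain. The `eq`, `reverb` and `compressor` functions are toy stand-ins invented for illustration (a gain boost, a one-sample echo and a hard limiter), not real DSP. The point is that each stage consumes the previous stage's output, so the stages cannot run at the same time, and reordering them changes the result.

```python
def eq(x):
    return [s * 2.0 for s in x]  # toy "EQ": a simple gain boost

def reverb(x):
    # toy "reverb": add a half-level copy of the previous input sample
    return [s + 0.5 * (x[i - 1] if i > 0 else 0.0) for i, s in enumerate(x)]

def compressor(x, threshold=1.0):
    return [max(-threshold, min(threshold, s)) for s in x]  # toy hard limiter

def process_chain(samples, chain):
    """Apply effects in series: each stage waits for the previous stage's output."""
    for effect in chain:
        samples = effect(samples)
    return samples

print(process_chain([0.25, 0.5, 0.0], [eq, reverb, compressor]))  # → [0.5, 1.0, 0.5]
print(process_chain([0.25, 0.5, 0.0], [compressor, eq, reverb]))  # → [0.5, 1.25, 0.5]
```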

It's All About Efficiency

Passing calculations from one core to another costs extra CPU cycles. That's why it's usually more efficient to process a whole effects chain on one core. While multiple tracks can of course be processed side-by-side, each individual track will need to complete its calculations, including processing all its effects, on a single core. Once these tracks have been fully calculated they might be routed to a bus track where yet more effects will be applied. Again, your CPU can work on several of these buses in parallel, but each individual bus needs to be completely processed on a single core.
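The consequence of keeping each chain on one core can be shown with a simple scheduling sketch. It assigns whole tracks to the least-loaded core using a longest-first greedy heuristic; that heuristic and the per-track costs (in milliseconds per buffer) are assumptions for illustration, not how any particular DAW schedules its work.

```python
import heapq

def schedule(track_costs_ms, cores):
    """Assign whole track chains to cores; return the busiest core's time (ms).

    Longest-first greedy heuristic, assumed for illustration only.
    """
    loads = [0.0] * cores  # per-core busy time, kept as a min-heap
    for cost in sorted(track_costs_ms, reverse=True):
        lightest = heapq.heappop(loads)         # least-loaded core so far
        heapq.heappush(loads, lightest + cost)  # the whole chain stays on that core
    return max(loads)

tracks = [0.5, 0.25, 0.25, 0.5, 2.0]  # one track carries a heavy effects chain
print(schedule(tracks, cores=2), schedule(tracks, cores=16))  # → 2.0 2.0

bus_ms = 0.5  # a bus can only start once all the tracks feeding it are done
print(schedule(tracks, cores=16) + bus_ms)  # → 2.5
```

Because no chain can be split, the heaviest track sets a floor that extra cores cannot lower.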

Increasing the size of your audio buffer gives the CPU more time to complete each block of calculations, and so a bit more headroom. But doing so introduces latency, which we want to avoid as much as possible when dealing with realtime audio.
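The latency added by the buffer is easy to work out: it's the buffer size in samples divided by the sample rate. The sketch below assumes a 48 kHz sample rate. It computes the length of one buffer only; the round-trip latency you actually hear is higher, since input and output buffering and the audio interface's converters add to it.

```python
def buffer_latency_ms(buffer_samples, sample_rate_hz):
    """Length of one audio buffer in ms: also the CPU's deadline for that block."""
    return buffer_samples / sample_rate_hz * 1000

for size in (64, 128, 256, 512):
    print(size, f"{buffer_latency_ms(size, 48_000):.2f} ms")
# 64 1.33 ms
# 128 2.67 ms
# 256 5.33 ms
# 512 10.67 ms
```

Doubling the buffer doubles the time the CPU has per block, and doubles the delay.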

The thing to remember is that any core which is unable to complete its calculations in time (that is, before the current buffer has to be handed over to your audio interface) will cause errors in your audio stream. This might take the form of stuttering, pops, or your audio engine just stopping altogether. And this is why musicians often find themselves tearing their hair out as a project completely overwhelms their processor, even as their resource monitor shows multiple cores sitting completely idle.
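A small sketch shows how this happens. It assumes a 128-sample buffer at 48 kHz and hypothetical per-core workloads in milliseconds: one core is overloaded while seven sit idle, so that core misses its deadline even though average utilisation across the whole CPU looks low.

```python
def late_cores(core_loads_ms, buffer_ms):
    """Indices of cores whose work for this buffer won't finish in time."""
    return [i for i, load in enumerate(core_loads_ms) if load > buffer_ms]

buffer_ms = 128 / 48_000 * 1000                   # ≈ 2.67 ms per buffer
loads = [3.1, 0.4, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]  # hypothetical ms of work per core
average_use = sum(loads) / (len(loads) * buffer_ms)
print(late_cores(loads, buffer_ms), f"{average_use:.0%}")  # → [0] 16%
```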

It's not just about how many cores you have — it's about how capable your individual cores are under pressure.

Picking Up the Thread

One other term you may hear from time to time is hyperthreading, or simultaneous multithreading (SMT). A thread is a string of instructions sent by a process to the CPU. Multithreading, as the name suggests, is a way of weaving together multiple threads so that they can run more efficiently. With SMT, each physical core is presented to the operating system as more than one logical core (usually two), and the threads running on those logical cores share the physical core's execution resources. If the CPU starts executing one thread and that thread runs into a delay (say, it can't find the data it needs in cache memory and has to go hunting for it in RAM), then that thread has to wait while the data is fetched, and the other thread can put the core to work in the meantime.
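The latency-hiding idea can be illustrated with a toy simulation. The instruction names (`op`, `miss`), the 3-cycle miss penalty and the `run_core` function are hypothetical. It's also a simplification: it issues at most one instruction per cycle, whereas real SMT cores can issue instructions from both threads in the same cycle.

```python
def run_core(threads, miss_penalty=3):
    """Return the cycle on which the last instruction completes.

    Each thread is a list of hypothetical instructions: "op" completes in one
    cycle; "miss" issues in one cycle, then its thread waits `miss_penalty`
    extra cycles for data from RAM. One instruction issues per cycle.
    """
    queues = [list(t) for t in threads]  # copy so the caller's lists are untouched
    ready_at = [0] * len(queues)         # cycle at which each thread may issue again
    cycle = 0
    while any(queues):
        for i, queue in enumerate(queues):
            if queue and ready_at[i] <= cycle:  # first thread that isn't stalled
                instr = queue.pop(0)
                stall = miss_penalty if instr == "miss" else 0
                ready_at[i] = cycle + 1 + stall
                break
        cycle += 1
    return max(ready_at, default=0)

thread = ["op", "miss", "op"]
print(run_core([thread + thread]), run_core([thread, thread]))  # → 12 8
```

Running the two threads back to back takes 12 cycles; interleaving them lets each thread's memory stall overlap with the other's work, so they finish in 8.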

The disadvantage of multithreading is that it can gum things up if a core is already overloaded. Older CPUs in particular may perform better in realtime audio if multithreading is switched off, but if your CPU is less than 10 years old I wouldn't recommend switching it off. Performance will also depend greatly on the way multithreading has been implemented by your DAW or audio host. For most CPUs, it's probably best not to touch these settings, as the way each CPU handles the distribution of threads across cores is highly complex and very CPU dependent.

What to Look Out for in a CPU for Music and Audio Production

As you can see, CPUs are complex beasts. There are a lot of factors determining their performance and relative suitability for particular tasks. Lots of cores can be good, yes, but it's also really important to look at clock speed, boost speed, instructions per clock, and how long boosts can be kept going for.

And then there's multicore and single core performance. If you have a large template with lots of instruments playing concurrently, you might want to prioritise multicore performance. But if you also run numerous plugins on your tracks and buses, you're going to hit a bottleneck unless you have powerful single core performance.

Unfortunately it's not easy to tell how well a CPU will perform just by looking at the specs. That's why I recommend looking at CPU benchmarks. These put processors through their paces in something resembling real-world tests. PassMark ranks CPUs both by single thread performance and overall (i.e. multicore) performance, and Geekbench has single core and multicore charts as well. My advice when it comes to audio and music production is to look for CPUs near the top of the single thread/single core charts, as that's the most likely choke point for modern processors, and then look for the best overall (multicore) performance from the CPUs in that group.
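That two-step approach can be expressed as a short filter-then-sort. The CPU names and scores below are invented placeholders, not real PassMark or Geekbench results, and the 5% tolerance for counting as "near the top" of the single core chart is an arbitrary assumption you'd adjust to taste.

```python
# Invented placeholder scores; substitute real benchmark results when shopping.
cpus = [
    {"name": "CPU A", "single": 4700, "multi": 61000},
    {"name": "CPU B", "single": 4600, "multi": 47000},
    {"name": "CPU C", "single": 4500, "multi": 88000},
    {"name": "CPU D", "single": 3900, "multi": 105000},
]

def shortlist(cpus, tolerance=0.05):
    """Keep CPUs within `tolerance` of the best single core score,
    then rank that group by multicore score, best first."""
    best_single = max(c["single"] for c in cpus)
    top_group = [c for c in cpus if c["single"] >= best_single * (1 - tolerance)]
    return sorted(top_group, key=lambda c: c["multi"], reverse=True)

print([c["name"] for c in shortlist(cpus)])  # → ['CPU C', 'CPU A', 'CPU B']
```

CPU D has the best multicore score here, but it drops out at the first step because its single core score is too far behind.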